Here’s your weekly dose of treats 💌

A weekly list of goodies curated by Robert.

Follow the white rabbit 🐇

🎶 Something to listen to while reading

Complex, fiery and divisive stuff I’ve been reading

The antidote to fake news is to nourish our epistemic wellbeing

There are three components of epistemic wellbeing: access to truths; access to trustworthy sources of information; and opportunities to participate in productive dialogue. Let’s think about these each in turn.

A Simple 2021 Reboot — My Short Letter to a Friend Who Wants to Get In Shape by Tim Ferriss.

This open ear headset is pretty cool.

Select a muscle and it shows you exercises that work out that muscle.

A mindfulness-based social network designed to be checked once a week.

Why Do We Keep Reading The Great Gatsby? I did not enjoy this book. Not sure why people do.

I've been listening to rare grooves from around the world on vinyl via a YouTube channel called My Analog Journal. The mix I enjoyed most is Guest Mix: Records from Brazil with Poly-Ritmo.

Mad Fientist’s ultralearning experiment: he tasked himself with writing a song in three months. Since then, he has gone on to produce an entire album. For more on ultralearning, check out the Mad Fientist's interview with ultralearner Scott Young.

New Report on How Much Computational Power It Takes to Match the Human Brain

Imitating Interactive Intelligence

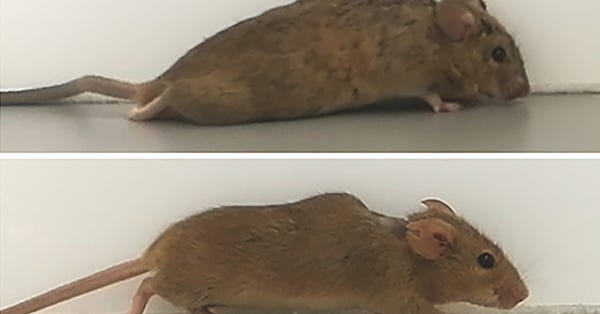

A common vision from science fiction is that robots will one day inhabit our physical spaces, sense the world as we do, assist our physical labours, and communicate with us through natural language. Here we study how to design artificial agents that can interact naturally with humans using the simplification of a virtual environment.

Biotechnology Research Viewed With Caution Globally, but Most Support Gene Editing for Babies To Treat Disease

Majorities across global publics accept evolution; religion factors prominently in belief

Thinking ahead: prediction in context as a keystone of language in humans and machines

Departing from classical rule-based linguistic models, advances in deep learning have led to the development of a new family of self-supervised deep language models (DLMs).

After training, autoregressive DLMs are able to generate new “context-aware” sentences with appropriate syntax and convincing semantics and pragmatics. Here we provide empirical evidence for the deep connection between autoregressive DLMs and the human language faculty using a 30-min spoken narrative and electrocorticographic (ECoG) recordings.

👽 My latest YouTube video

🐦 Tweets for thought

Thank you for reading 🤜🤛

If you enjoyed this, maybe I can tempt you with my YouTube channel.

My website is here.

💌 My Favorite Links: articles I've enjoyed, podcasts, tech, software, ideas, and personal philosophies.

I do share some thoughts on 📰 Instagram & on 🐦 Twitter.

I may start writing on ✍️ Medium at some point.

🔴 Discord server for the community.

Again, you can also reach out via Instagram, Twitter, or using gravitational waves.

That said, how’re you and yours doing this week? Any major changes to your status quo, or are things fairly locked-in and predictable at the moment?

I respond to every email I get, so consider sending me a message and telling me a bit about yourself and what’s been up in your world.

—

Have a great day ahead!